
Is AI Sustainable? The Hidden Environmental Cost of Artificial Intelligence

By Ketul

Updated 06 March, 2026


Artificial intelligence has quickly become one of the defining technologies of the digital era. AI systems now power search engines, recommendation algorithms, language models, fraud detection tools, and scientific research platforms. Governments, businesses, and researchers are increasingly relying on machine learning systems to analyse large datasets and automate complex tasks.

Because AI operates through software interfaces and cloud platforms, it often appears weightless and invisible. But the systems enabling artificial intelligence are deeply physical. Behind every AI model lies a network of data centres, high-performance processors, electricity infrastructure, and cooling systems that allow digital computation to take place.

As the scale of artificial intelligence expands, researchers and policymakers have begun examining the environmental implications of these technologies. Training advanced AI models requires enormous computational resources, and the infrastructure supporting them consumes significant amounts of energy and water.

Evidence discussed in the Stanford AI Index Report shows that the computational resources required for modern AI development have grown dramatically over the past decade. At the same time, the global expansion of digital infrastructure continues to increase electricity demand from data processing systems.

This does not mean that artificial intelligence is inherently unsustainable. AI technologies are also being used to improve climate modelling, optimise energy systems, and monitor environmental change. However, understanding both the benefits and the environmental costs of AI is essential as the technology becomes more deeply embedded in modern economies.

To understand whether AI can be sustainable, it is necessary to examine the infrastructure and resources that power artificial intelligence systems.

Why Artificial Intelligence Requires Massive Computing Power

Modern AI systems rely on machine learning models that process large datasets to identify patterns and make predictions. These models are typically neural networks: interconnected layers of computation whose internal parameters are adjusted as the model learns from data.

Training such systems requires repeating mathematical calculations an enormous number of times as models gradually adjust their internal parameters. The process demands specialised hardware designed to perform highly parallel computations.

The scale of this computational effort has increased rapidly. Research tracking machine learning development, including OpenAI's analysis of AI compute trends, shows that the computing power used to train leading AI models has grown extremely quickly as models have become larger and more complex.

To support this computational intensity, companies deploy clusters of graphics processing units (GPUs) and other specialised chips capable of handling large volumes of calculations simultaneously. These processors run continuously during model training, often for extended periods.

Academic discussions published in Nature Machine Intelligence note that the increasing size of machine learning models has significantly expanded the computing resources required for AI development.

Each additional layer of complexity requires more processing power, more hardware, and ultimately more energy.
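To give a sense of the scale involved, the sketch below uses a widely quoted rule of thumb for dense transformer language models: total training compute is roughly 6 floating-point operations per model parameter per training token. It then converts that compute into GPU-hours. The model size, token count, per-GPU peak throughput and utilisation are hypothetical placeholders, not figures for any real model or chip, and the 6 × parameters × tokens approximation does not hold for every architecture.

```python
# Rough training-compute estimate using the common ~6 * N * D rule of thumb
# for dense transformer models (N = parameters, D = training tokens).
# All inputs below are hypothetical placeholders, not figures for a real model.


def training_flops(n_params: float, n_tokens: float) -> float:
    """Approximate total floating-point operations for one training run."""
    return 6.0 * n_params * n_tokens


def gpu_hours(total_flops: float, peak_flops_per_gpu: float, utilisation: float) -> float:
    """Convert total FLOPs into GPU-hours, given a per-GPU peak rate (FLOP/s)
    and the assumed fraction of that peak actually achieved in training."""
    effective_rate = peak_flops_per_gpu * utilisation  # FLOP per second per GPU
    seconds = total_flops / effective_rate
    return seconds / 3600.0


if __name__ == "__main__":
    # Hypothetical 7-billion-parameter model trained on 1 trillion tokens.
    flops = training_flops(n_params=7e9, n_tokens=1e12)
    # Hypothetical accelerator: 3e14 FLOP/s peak, 40% of peak achieved.
    hours = gpu_hours(flops, peak_flops_per_gpu=3e14, utilisation=0.4)
    print(f"Total compute: {flops:.2e} FLOPs")
    print(f"GPU-hours: {hours:,.0f}")
```

Under this approximation, doubling either the parameter count or the number of training tokens doubles the estimated compute and the GPU-hours with it, which is one way to see why larger models translate into more hardware time and, ultimately, more energy.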

The Growing Energy Footprint of Artificial Intelligence

Because AI relies on intensive computation, energy consumption has become one of the central environmental concerns associated with artificial intelligence.

AI workloads are typically executed within data centres, where large numbers of servers perform the calculations required for machine learning models and digital services. These facilities run continuously, consuming electricity to power computing equipment as well as cooling systems that prevent hardware from overheating.

According to analysis from the International Energy Agency, data centres and data transmission networks together account for a growing share of global electricity demand as digital services expand.

Some research has attempted to estimate the energy required to train large AI models. A widely cited study conducted at the University of Massachusetts Amherst suggested that training certain large natural language processing models could generate substantial carbon emissions depending on the energy source used.

These estimates vary widely depending on factors such as model size, training duration, hardware efficiency, and the carbon intensity of electricity used by data centres.
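How these factors combine can be illustrated with a simple accounting sketch: electricity use is accelerator-hours multiplied by average power draw and a facility overhead factor (power usage effectiveness, or PUE), and operational emissions are that electricity multiplied by the grid's carbon intensity. This is a simplification that ignores emissions from manufacturing the hardware, and every input below is hypothetical, chosen only to show how the same training run can produce very different emissions on different grids.

```python
# Simplified operational-emissions estimate for one training run.
# Ignores embodied (manufacturing) emissions, networking, and idle time.
# All input values are hypothetical, chosen only to show how the factors interact.


def training_energy_kwh(gpu_hours: float, avg_power_kw: float, pue: float) -> float:
    """Grid electricity: accelerator energy scaled up by facility overhead (PUE)."""
    return gpu_hours * avg_power_kw * pue


def training_emissions_kg(energy_kwh: float, grid_kg_co2e_per_kwh: float) -> float:
    """Operational emissions: energy multiplied by the grid's carbon intensity."""
    return energy_kwh * grid_kg_co2e_per_kwh


if __name__ == "__main__":
    energy = training_energy_kwh(gpu_hours=100_000, avg_power_kw=0.5, pue=1.2)
    # The same run on two hypothetical grids: one fossil-heavy, one low-carbon.
    for label, intensity in [("fossil-heavy grid", 0.7), ("low-carbon grid", 0.05)]:
        tonnes = training_emissions_kg(energy, intensity) / 1000
        print(f"{label}: {energy:,.0f} kWh -> {tonnes:,.1f} t CO2e")
```

With these made-up inputs, the fossil-heavy grid produces 14 times the emissions of the low-carbon grid for identical computation (0.7 / 0.05 = 14), which illustrates why the carbon intensity of the electricity used is such an important variable.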

What remains clear is that AI development increasingly intersects with energy systems. As models grow larger and more widely deployed, their electricity demand becomes an important consideration for sustainability.

Data Centres: The Physical Infrastructure Behind AI

Behind the algorithms and software interfaces associated with artificial intelligence lies a vast network of data centres.

Data centres are specialised facilities that house servers, storage systems, and networking equipment responsible for processing digital information. These facilities support cloud computing platforms, online services, and increasingly, AI model training and deployment.

Large technology companies operate hyperscale data centres that contain tens of thousands of servers. These facilities are designed to maximise computing capacity while maintaining stable operating conditions for sensitive hardware.

However, maintaining these environments requires significant energy. Servers generate heat as they perform computations, and cooling systems are required to maintain safe temperatures. Air conditioning systems, liquid cooling systems, and heat management technologies all contribute to the overall energy consumption of data centre operations.
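One common way to express how much extra energy goes to cooling and other supporting systems is power usage effectiveness (PUE): total facility energy divided by the energy delivered to IT equipment. A PUE of 1.0 would mean no overhead at all. The sketch below computes PUE and the resulting overhead share from hypothetical annual energy figures; the split between cooling and other overheads is an illustrative assumption, not data from any real facility.

```python
# Power usage effectiveness (PUE) = total facility energy / IT equipment energy.
# Figures below are hypothetical annual totals, not data from any real facility.


def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Ratio of all energy entering the facility to energy used by IT equipment."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy not delivered to IT equipment
    (cooling, power conversion losses, lighting and so on)."""
    return 1.0 - 1.0 / pue_value


if __name__ == "__main__":
    it_kwh = 80_000_000        # servers, storage, networking
    cooling_kwh = 16_000_000   # chillers, fans, pumps
    other_kwh = 4_000_000      # power distribution losses, lighting
    value = pue(it_kwh + cooling_kwh + other_kwh, it_kwh)
    print(f"PUE = {value:.2f}, overhead = {overhead_share(value):.0%} of total energy")
```

Lowering PUE through more efficient cooling reduces total electricity use without changing the amount of computing work performed.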

Research discussed in Nature Climate Change highlights the importance of improving the energy efficiency of data centres and transitioning them toward renewable electricity sources to reduce their environmental impact.

Many technology companies have begun investing in renewable energy procurement and more efficient data centre designs. However, the rapid growth of digital infrastructure continues to raise questions about how computing demand will evolve in the coming decades.

The Water Footprint of Artificial Intelligence

Electricity consumption is often the most visible environmental cost of artificial intelligence, but cooling infrastructure introduces another important resource demand: water.

Data centres generate large amounts of heat when servers process intensive computational workloads. To maintain safe operating temperatures, many facilities rely on cooling systems that use water to absorb and dissipate heat.
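Water use is often tracked with a companion metric, water usage effectiveness (WUE), defined as annual on-site water use in litres divided by IT equipment energy in kilowatt-hours. The sketch below uses hypothetical WUE values and a hypothetical energy load to show how strongly the cooling approach can change a facility's water consumption for the same computing workload.

```python
# Water usage effectiveness (WUE) = annual site water use (litres) / IT equipment energy (kWh).
# The WUE values below are hypothetical, picked to contrast a facility that relies
# heavily on evaporative (water-based) cooling with one that uses little water.


def site_water_litres(it_energy_kwh: float, wue_l_per_kwh: float) -> float:
    """Estimated on-site water consumption for a given IT energy load."""
    return it_energy_kwh * wue_l_per_kwh


if __name__ == "__main__":
    it_kwh = 80_000_000  # hypothetical annual IT energy
    for label, wue in [("water-cooled site", 1.8), ("low-water site", 0.2)]:
        megalitres = site_water_litres(it_kwh, wue) / 1e6
        print(f"{label}: about {megalitres:,.0f} ML of water per year")
```

WUE as defined here counts only water used on site; water consumed in generating the electricity that powers the facility is not included, so the total water footprint can be larger.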

Research from Microsoft’s sustainable computing program highlights how cooling systems represent a major operational challenge for high-performance computing environments.

Investigations reported by MIT Technology Review have also examined how large AI workloads can increase water consumption when data centres depend heavily on water-based cooling systems.

In regions already experiencing water stress, this raises questions about how digital infrastructure can be developed responsibly.

The Hardware Supply Chain Behind AI

Artificial intelligence also relies on an extensive hardware supply chain. Advanced AI models are trained and deployed on specialised processors manufactured through complex semiconductor fabrication processes.

Producing these chips requires raw materials, specialised chemicals, and highly energy-intensive manufacturing facilities. Semiconductor production also consumes large volumes of purified water.

The United Nations International Resource Panel has highlighted the environmental pressures associated with expanding digital hardware production, including resource extraction, material processing, and electronic waste.

Although individual chips and servers may appear small compared with heavy industrial equipment, the scale of global electronics production means their cumulative environmental impact is significant.

Can Artificial Intelligence Also Help the Planet?

Despite these environmental costs, artificial intelligence also has the potential to support sustainability efforts.

AI systems are increasingly used to analyse environmental data and improve decision-making in areas such as energy management, climate modelling, and ecosystem monitoring.

Environmental monitoring initiatives supported by organisations such as NASA Earth Science rely on machine learning techniques to analyse satellite imagery and environmental datasets.

Similarly, platforms such as Google Earth Engine enable researchers to track deforestation, monitor land use change, and study environmental trends using large geospatial datasets.

In these contexts, artificial intelligence can provide valuable tools for understanding and managing complex environmental systems.

The challenge is ensuring that the environmental benefits of AI applications outweigh the resource costs required to operate them.

Building More Sustainable Artificial Intelligence

As awareness of AI’s environmental footprint grows, researchers and technology companies are exploring ways to make artificial intelligence systems more sustainable.

One approach focuses on improving algorithm efficiency so that models require less computational power. Researchers are also developing hardware and infrastructure that reduce electricity consumption during training and deployment.

Another strategy involves powering data centres with renewable electricity. Many large technology companies have already committed to sourcing renewable energy for their global data centre operations.

The International Energy Agency notes that improving energy efficiency and expanding renewable power will be essential for reducing the environmental impact of the growing digital economy.

Transparency around the energy consumption of AI systems is also becoming an important area of discussion among researchers and policymakers.

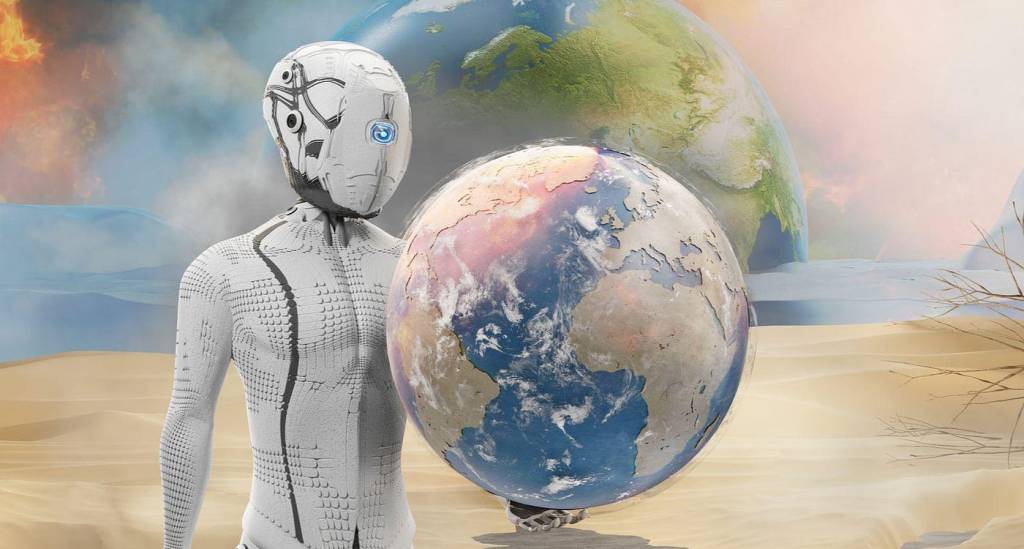

A Balanced View of AI and Sustainability

Artificial intelligence represents both an opportunity and a challenge for sustainability.

On one hand, the infrastructure required to train and deploy advanced AI systems consumes energy, water, and materials. As AI adoption grows, these resource demands may increase significantly.

On the other hand, AI technologies offer powerful tools for improving environmental monitoring, energy efficiency, and scientific understanding of climate systems.

Whether artificial intelligence becomes environmentally sustainable will depend on how it is designed, powered, and governed. Efficient algorithms, renewable-powered infrastructure, responsible hardware manufacturing, and transparent reporting can all help reduce its environmental footprint.

Digital technologies may operate in the virtual world, but their environmental impacts remain firmly connected to the physical one.

FAQs

1. Is artificial intelligence environmentally sustainable?

Artificial intelligence is not inherently sustainable or unsustainable. Its environmental impact depends largely on how AI systems are developed and powered. Training large AI models requires significant computing resources, which consume electricity and water, but AI can also help optimise energy systems and support climate research.

2. Why does AI consume so much energy?

AI systems rely on complex machine learning models that require enormous computational power to process large datasets. Training these models involves running vast numbers of calculations on specialised processors such as GPUs, which consume significant amounts of electricity in data centres.

3. How much electricity do AI data centres use?

Data centres that support AI and cloud computing consume substantial amounts of electricity. According to the International Energy Agency, data centres and data transmission networks together account for a growing share of global electricity demand as digital infrastructure expands.

4. Does artificial intelligence use water?

Yes. Many data centres use water-based cooling systems to prevent servers from overheating during intensive computing tasks. As AI workloads increase, facilities that rely heavily on water-based cooling may require large volumes of water, which is a particular concern in regions already experiencing water stress.

5. What is the carbon footprint of AI models?

The carbon footprint of an AI model depends on factors such as its size, the computing resources required for training, and the source of electricity used by data centres. Training very large models can generate significant emissions if powered by fossil fuel-based energy.

6. Why are data centres important for artificial intelligence?

Data centres provide the computing infrastructure that allows AI systems to operate. They house thousands of servers and specialised processors that perform the calculations required for training and running machine learning models.

7. Can artificial intelligence help fight climate change?

AI can contribute to climate solutions by improving climate modelling, optimising energy grids, monitoring deforestation, and analysing environmental data from satellites. These applications can help scientists and policymakers better understand and manage environmental systems.

8. What are the environmental challenges of AI hardware production?

AI systems rely on specialised chips and electronic equipment that require resource-intensive manufacturing processes. Producing these components involves mining raw materials, energy-intensive semiconductor fabrication, and global supply chains that contribute to environmental impacts.

9. How can AI become more environmentally sustainable?

AI can become more sustainable through energy-efficient algorithms, renewable-powered data centres, improved hardware efficiency, and transparent reporting of the energy use associated with training large models.

10. Will AI increase global energy demand in the future?

As AI adoption expands across industries, demand for computing power and digital infrastructure is expected to grow. This could increase global electricity demand unless efficiency improvements offset the additional consumption, while expanding renewable energy can help limit the emissions associated with that demand.
